Hi Everyone,

If you discover any heuristics that help you determine a better level of abstraction while coding, could you post them here? A description of the situation the heuristic applied to would also help.

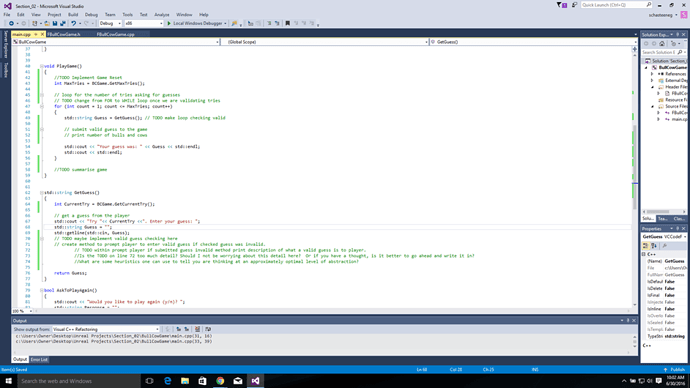

To start the conversation, I attached the following image of my pseudocode from Section 2 Lecture 29 “Pseudocode Programming”. I feel like my comment in the TODO on line 72 in the code below goes into too much detail, and that I should not include it there. If I omitted line 72, I think I would be at the “correct” level of abstraction, whatever that is. On the other hand, the detail makes sense, and I am going to have to implement it anyway. So I am stuck on how to tell when I am going into too much detail and when I am not. I suppose this is subjective, but I was hoping for some rules of thumb, or just some thoughts if this is interesting to anyone. If you have any references to books or articles, those would be welcome too!
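To make the question concrete, here is a small sketch of the kind of trade-off I mean. It is a made-up example, not the code from the image: the function names `average_valid_scores` and `is_valid_score` and the 0 to 100 range are assumptions chosen only for illustration. The comments in the first function stay at the level of intent, and the detail I would have been tempted to put in a TODO is pushed down into a helper instead.

```python
# Hypothetical example; names and the 0..100 range are illustrative assumptions.

def average_valid_scores(raw_scores):
    """Return the mean of the scores that pass validation, or None if none do."""
    # Keep only the scores that are valid
    valid = [score for score in raw_scores if is_valid_score(score)]

    # If nothing is left, report that there is no average
    if not valid:
        return None

    # Otherwise return the mean of what remains
    return sum(valid) / len(valid)


def is_valid_score(score):
    """Hold the lower-level detail that would clutter the caller's comments."""
    # bool is a subclass of int in Python, so exclude it explicitly
    if isinstance(score, bool):
        return False
    # Accept only real numbers inside the assumed 0..100 range
    return isinstance(score, (int, float)) and 0 <= score <= 100


if __name__ == "__main__":
    print(average_valid_scores([90, 110, "x", 70]))
```

One possible rule of thumb this suggests (an assumption on my part, not an established answer): if a comment starts naming types, ranges, or loop mechanics, it may belong one level down, in its own routine with its own intent-level comments.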

Of course, I will post any heuristics I find useful here as well.

Thanks!

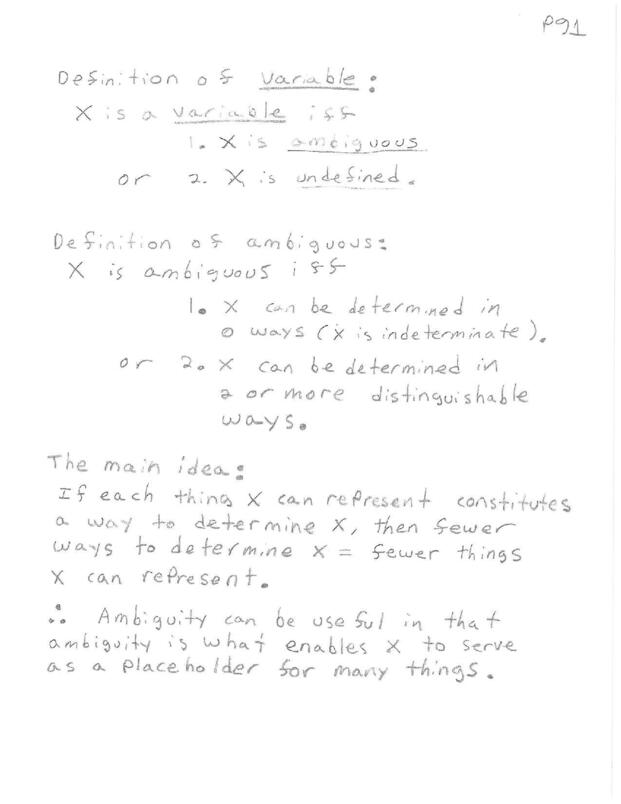

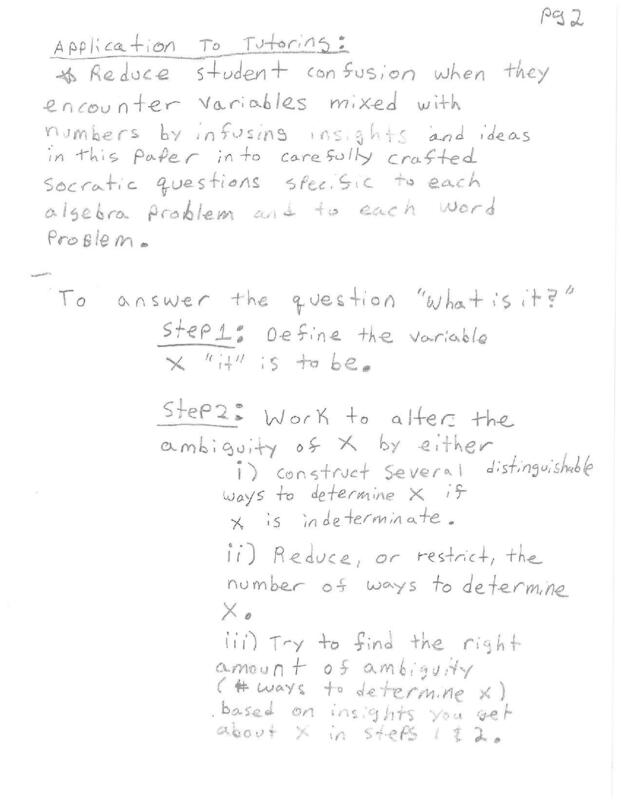

While working as a professional tutor, I found that a couple of students who were struggling with the concept of variables in algebra were helped by a brief explanation of how ambiguity is what makes variables useful, but is also what makes them confusing! A natural thought might be that the more things a variable can represent (that is, the more ambiguous the variable is), the fewer details we know about that particular variable, and so the less we know overall. But this is actually not the case! Example: if we tried to get a grip on what the human brain does by looking at the detailed movement of every single electron, atom, and molecule in every neuron, we would have a low level of ambiguity but would also be quite confused. If we instead abstract those details away into admittedly imperfect models of how a given brain region functions, we can make some useful predictions. So in this case, more abstraction leads to better understanding.

What I have noticed in my own learning is that some concepts seem to have an optimal level of ambiguity, or level of abstraction, where the nature of the thing almost seems trivial, simple, and uninteresting, and that straying from that level just a little in either direction (more or less ambiguous) increases the complexity but also the resolution of the concept. This is all admittedly abstract, but it is a work in progress that I hope will become more refined over time.

Think “Level of Abstraction” = “Level of Ambiguity” in the two JPEG files below, and that “Optimal Level of Abstraction” = “Optimal Level of Ambiguity” = “Optimal Number of distinguishable things or functions an object can be or do”. I am not sure if this makes sense in the context of abstraction as it is used here in object-oriented programming.